Americans Hate AI

Super Bowl ads and artificial intelligence's severe public image problem.

Artificial intelligence has a severe public image problem.

The average American despises AI.

57% of Americans rate the risks of AI as high versus 23% for benefits.

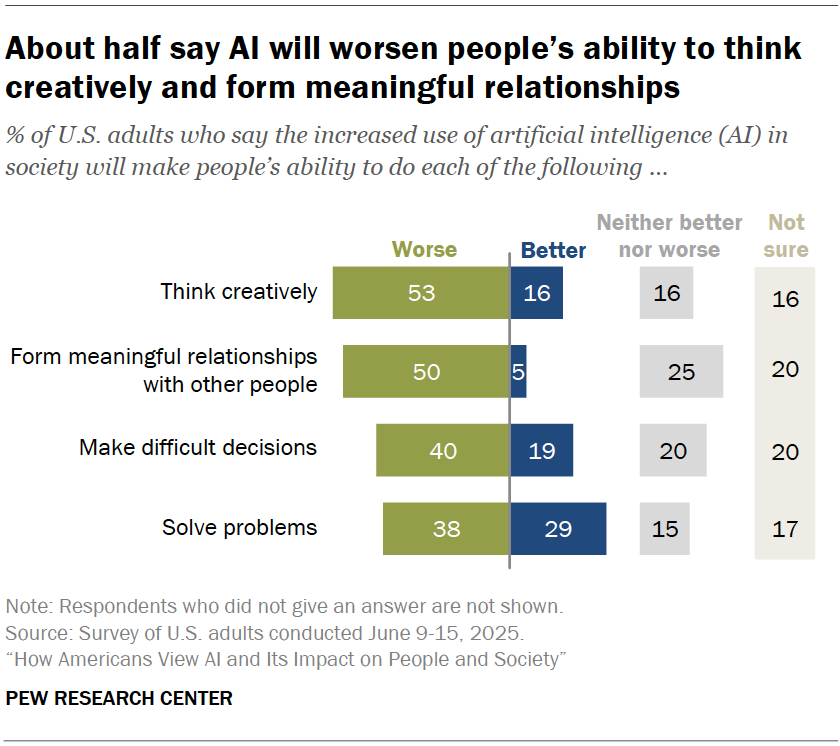

The most common reason was the “concern about AI eroding human abilities and connections” (27%).

The second, at 18%, was the “negative impact on accuracy of information.”

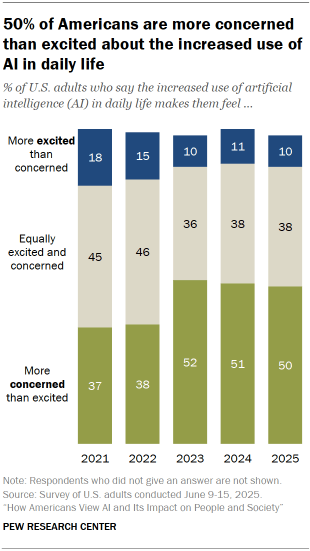

50% of Americans are more concerned than excited about the increased use of AI in daily life (up from 37% in 2021).

51% believe strongly that “people’s ability to do things on their own will get worse because of using AI”

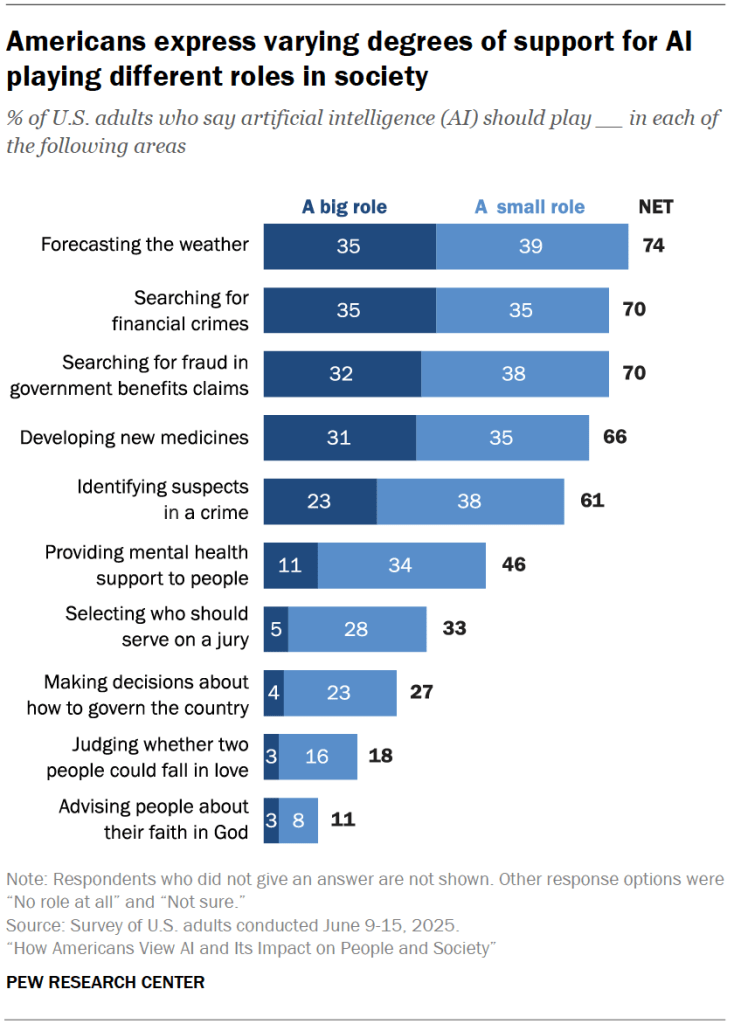

This data comes directly from a recent survey (n = 5,023) by the Pew Research Center on “How Americans View AI and Its Impact on People and Society.” The survey illuminates the strong disconnect between Silicon Valley and the rest of America.

The leaders in the field have taken note, but fail to grasp the crux of the issue. We just saw two Super Bowl ads, though I doubt they will change the overall sentiment.

Claude’s ad was an attack on ChatGPT / OpenAI and their inclusion of ads on the platform. It was more about the platform’s differentiation than about the concerns the public has around AI. I wonder how this reads to a person who has spent most of their life with ads across all their platforms, whether that be Facebook, Instagram or TikTok. Are ads really the worst thing for a free service? (Especially since they represent the status quo.)

ChatGPT’s “You Can Just Build Anything” has a positive message but will fail to resonate broadly with the public, in my opinion. This fascination with building feels, in its current depiction, nerd-coded (respectfully).

The issue with most “build-anything” platforms is that the actual work is in determining what to build. We’ve long harped on about homemade software, but it will fail to materialize until people develop the proper language to put their quotidian issues into words. Homemade software remains artisanal or niche, the knack of hobbyists and tinkerers.

Overall, this has been a total comms failure.

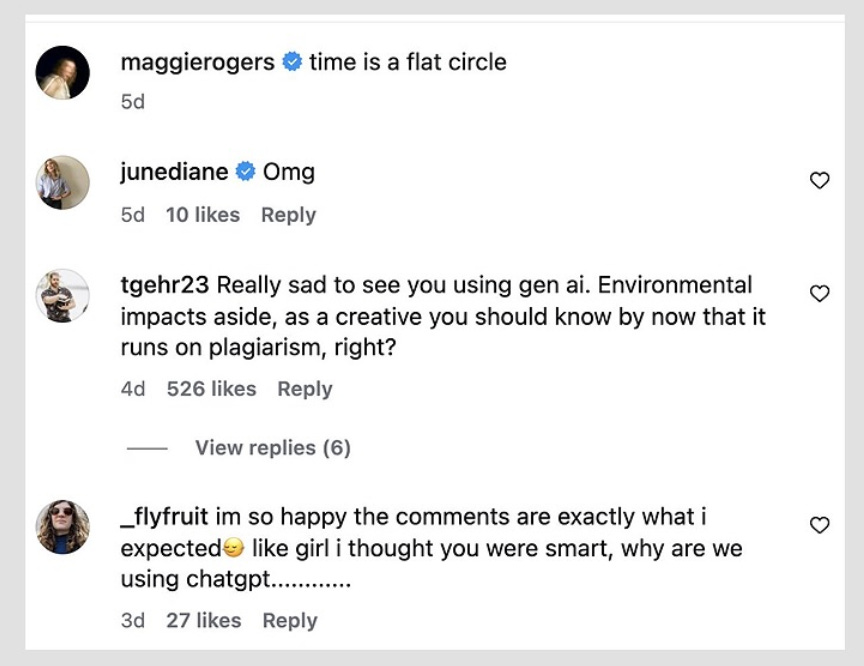

These days, any post on social media which may or may not use generative AI is swarmed by an angry mob usually expressing their disappointment or ire towards the artist or creator.

This could be a small vocal minority, who through their vociferousness is trying to sway public opinion, but no, this is a majority of Americans.

CEOs of AI companies are in a tough position. They need to balance hyping up the technology so they can raise the next massive round of funding whilst not scaring constituents (and their representatives) so much that they get over-regulated, thereby capping their potential profits.

You need to tell funders that this technology will change everything, that the total addressable market is quasi-infinite, that you’re building AGI (artificial general intelligence, AI that is “smarter” than humans), that every company will buy AI to replace workers, that every child will have an AI tutor, that every weapon used by the US Army will use AI, etc., because that implies billions in revenue and that justifies the extreme valuations (at time of writing, the total market cap of AI companies is around 24 trillion USD).

In the same breath, you need to tell Congress that you have this all under control and that they need not worry about regulation as it would stifle innovation. Worse, regulation would slow down technological progress and our sworn enemy China could surpass the United States in AI. If China had access to AGI, they could become the next geopolitical superpower and that would be highly detrimental to US hegemony.

The challenge with the AI communications strategy is due to this paradoxical set of beliefs, this ambiguous line being tip-toed. Importantly, this messaging addresses two specific groups: capital owners and policy makers. It fails to address the general public and tell it why it would benefit from the future where AI is ubiquitous or, even, frankly, why it should care that China has AGI.

What is the generally understood “benefit” of this AGI?

In 2023, after the launch of ChatGPT, the tech CEOs decided to fear-monger on national television and in front of Congress instead of highlighting benefits such as the Nobel Prize-winning AlphaFold, which can predict complex protein structures and will be used in drug discovery, or self-driving, which could reduce road accidents and deaths by a significant margin. A strange choice.

On 60 Minutes, Sundar Pichai, CEO of Google, called it akin to the “discovery of fire and electricity” and warned that it was “keeping him up at night.” They spoke of the great societal changes coming, with an emphasis on job displacement, but not so much of benefits.

You don’t tell Americans they will lose their jobs. That is a huge no-no. As Dave Chappelle famously said, “You never get between a man and his bread.”

If people will lose their jobs and none of them are presented with a clear benefit to their lives, why should they like it?

If what they hear are complaints from their favorite musicians and actors about plagiarism and lack of compensation, why should they side with tech instead of their beloved entertainers?

Since 2023, the tech companies have made billions of dollars. But has the average person in Charlotte, in Missoula, in Boca Raton, in Huntington, in Burlington?

Does the average person actually benefit from this technology?

The advancement of this technology seems to be to the benefit of a specific class of asset owners and the prevailing sentiment is that this will not trickle down. People do not believe in the trickle-down anymore.

Because of the last 25 years of technology, they have been once bitten, and are, now, twice shy, in the words of George Michael.

Smartphones promised connection, they got isolation.

Social media promised interaction, they got polarization.

(The above is a little uncharitable, but my sense is this is how people feel.)

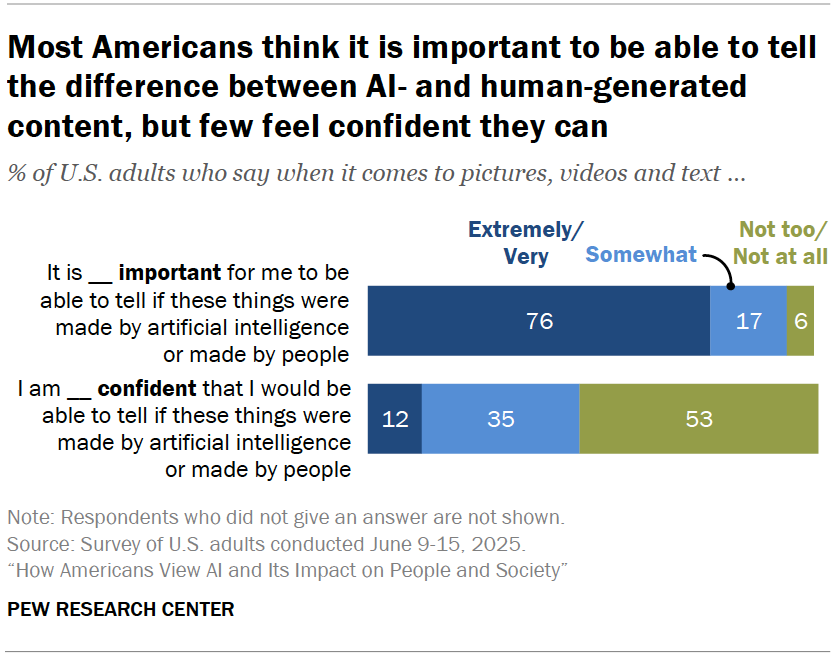

Now their feeds are being flooded with AI-generated content. They can see the negative impact. They can also, as below, foresee the upcoming negative impacts.

Put yourself in the shoes of the average American and ask: will the gargantuan financial gains obtained from this technology trickle down to the general public through redistribution or taxation?

The fundamental image issue with AI is that the risks are so abundantly clear. People already see the impact on education, on children, on relationships.

They already see generated content all over their social media feeds. They see loved ones being duped by AI.

All the while, the benefits remain unclear in the eyes of the public.

Tech companies have failed to paint a picture of what this AI future means for the average American and what it will look like.

Importantly, why should they want it?

AI;DR

https://thelastchord.substack.com/p/you-dont-know-what-ai-is